The Competence Cliff

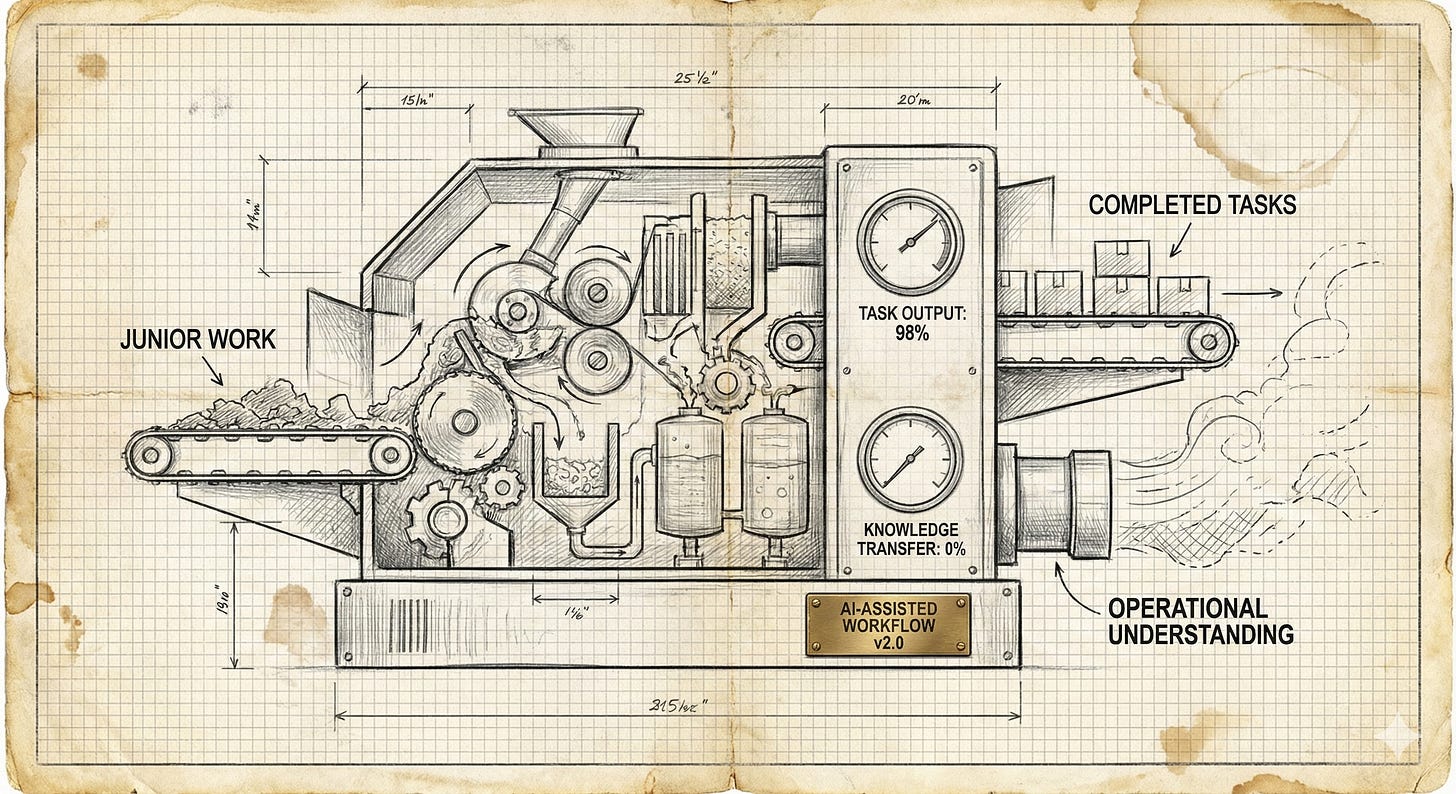

AI is automating the grunt work that made senior practitioners senior. Nobody's asking where the next generation of experts comes from.

A couple of weeks ago I wrote about the accountability vacuum: what happens when organisations replace roles with AI systems and nobody’s left to answer for decisions when things go wrong. Since then, I’ve been watching the industry’s favourite rebuttal make the rounds: “We’re not eliminating judgment. We’re keeping senior people and automating the junior work.”

This is the same crowd that six months ago was posting about “AI-first teams” and “doing more with less.” Now they’re keeping the seniors. How generous. But here’s the follow-up question nobody seems to be asking: where do you think senior people come from?

They don’t appear fully formed from a LinkedIn certification. Nobody becomes a senior SEO by reading about SEO. Seniority isn’t a knowledge threshold you cross. It’s a capability built through years of doing work that, from the outside, looks like it could be automated. The industry is currently treating that work as waste to be eliminated. I think it’s the entire apprenticeship. And we’re dismantling it so we can save on headcount and call it innovation.

What “senior” actually means

Ask someone what makes a senior SEO senior and you’ll usually get an answer about knowledge. They understand technical SEO deeply. They know how Google works. They can build a strategy. If they’re feeling performative about it, they’ll mention their certifications or their “10x SEO strategist” LinkedIn headline. Maybe a webinar they hosted.

That’s the résumé version. The real answer is less flattering: a senior SEO is someone who’s been wrong enough times, in enough different contexts, to recognise what “wrong” looks like before it happens. No certification programme teaches that.

No course sells it. It’s not a credential. It’s scar tissue.

I didn’t become competent at site migrations because I studied migration checklists. I became competent because I’ve been through enough of them to know that a checklist covers (at best) about 60% of what actually goes wrong. The other 40% is contextual, unpredictable, and only recognisable if you’ve seen a version of it before. The migration where the problem wasn’t the redirects but the internal linking logic. The one where everything looked fine until somebody noticed, two months later, that the canonicals were pointing at the wrong URL variants. The one where the client swore they hadn’t changed anything and the staging robots.txt had been live for three weeks.
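The staging robots.txt case is the easiest of those to show concretely. What follows is a minimal sketch, not a migration check: the domain, the URLs and both file contents are made up for illustration, and the only real API involved is Python's standard-library `urllib.robotparser`.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical contents: the blanket block a staging site typically ships with.
STAGING_ROBOTS = """\
User-agent: *
Disallow: /
"""

# Hypothetical contents: what production was supposed to be serving.
PRODUCTION_ROBOTS = """\
User-agent: *
Disallow: /checkout/
"""

# Placeholder URLs on a placeholder domain.
SAMPLE_URLS = [
    "https://www.example.com/",
    "https://www.example.com/products/widget",
    "https://www.example.com/checkout/basket",
]


def blocked_urls(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs that this robots.txt forbids `agent` from fetching."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


print(blocked_urls(STAGING_ROBOTS, SAMPLE_URLS))     # all three URLs
print(blocked_urls(PRODUCTION_ROBOTS, SAMPLE_URLS))  # only the checkout URL
```

Two lines of text separate "the whole site is crawlable" from "nothing is". Nothing on the rendered pages changes, which is why it can sit live for weeks while everyone insists nothing was touched.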

None of that comes from a framework. It comes from reps.

Part of what reps give you is something no tool or best practice guide can: the judgment to know when the best practice is wrong. A junior follows the checklist because the checklist is all they have. A senior knows when to ignore it. Not out of arrogance, but because they’ve seen enough situations where the “correct” answer made things worse. They’ve watched a canonicalisation fix tank traffic because the real problem was elsewhere. They’ve seen a tool flag hundreds of critical issues on a site that was performing perfectly well. Seniority includes the confidence to look at what the tool says, look at the context, and say “no, not here.”

That confidence isn’t transferable. You can’t teach someone when to break the rules until they understand, from experience, why the rules exist in the first place.

Seniority, in any technical discipline, is pattern recognition built through repeated exposure to varied failure. You see the same category of problem (crawl inefficiency, indexing issues, ranking drops after a redesign) but each instance is different. Different stack, different business constraints, different combination of things that are broken simultaneously. Over time, you stop needing the checklist because you’ve internalised what it’s trying to protect you from. You develop what feels like intuition but is actually compressed experience.

This can’t be shortcut. You can’t read your way to pattern recognition. You can’t watch a course on it. You can’t prompt-engineer your way around it. You have to do the work, get it wrong, understand why, and do it differently next time. Repeatedly. Across years. There is no hack for this. Sorry.

The education was in the grunt work

Here’s how the pipeline used to work, before everyone decided it was inefficient.

A junior SEO would spend their first year or two doing work that nobody envied. Running crawls and reading the output line by line. Manually checking whether redirects actually landed where they were supposed to. Pulling ranking data into spreadsheets and trying to spot patterns. Tagging pages by hand. Auditing title tags across a 10,000-page site. Writing up findings that a senior colleague would review, question, and send back covered in red ink.

This work was tedious. It was time-consuming. It was, by any reasonable efficiency metric, a poor use of a human being’s time.

It was also the entire education.

You don’t learn what a healthy crawl looks like by having a tool summarise it for you. You learn it by staring at thousands of unhealthy ones until you can spot what’s off in seconds. You don’t learn what good internal linking looks like from a best practices document. You learn it by manually tracing link paths on broken sites until you understand how link equity distributes and where it leaks.

The manual, tedious, unglamorous work forced juniors to engage with the raw material of the discipline. Not the abstraction — the actual data, the actual pages, the actual behaviour of systems under real conditions. That engagement built understanding that no summary can replicate.

I’m not saying boring work is good because it’s boring. The point is that the repetitive work was where the learning happened, and we’ve started treating it as waste to be eliminated rather than education to be preserved.

What AI actually replaces

AI is very good at doing the things juniors used to do. It can crawl a site and produce a prioritised list of issues. It can audit title tags at scale. It can generate a technical SEO report from a set of inputs. It can answer “what’s the best practice for X” with reasonable accuracy. It’s fast, it’s cheap, and it doesn’t complain about being given boring work. Executives love that last part especially.

The task gets done faster, cheaper, and more consistently. A tool that audits 10,000 title tags doesn’t get tired, doesn’t miss pages, and doesn’t need onboarding. If you’re evaluating AI purely on task completion, it looks like progress. If you’re an executive whose bonus is tied to “operational efficiency,” it looks like a gift.

But the task was never the point. The task was the vehicle for learning.

When a junior manually audits those 10,000 title tags, they’re not just producing a spreadsheet. They’re absorbing context. They’re noticing that this section of the site has a completely different naming convention. They’re spotting that the blog templates are missing schema that the product pages have. They’re developing a feel for how this particular site is structured, where its patterns break down, and what the team that built it was probably thinking. That background understanding becomes the foundation for strategic thinking later.

An AI can do the audit. It can’t do the learning that the audit produces in the person doing it.

This is the distinction the industry keeps missing. “AI frees up juniors to focus on strategy” is one of those phrases that sounds smart in a keynote and falls apart under three seconds of scrutiny. Who says this? Mostly vendors selling AI tools and executives who haven’t done junior-level work since before Google had AI Overviews. Strategy isn’t a mode you switch to once the boring work is done. It’s a capability that grows out of having done the boring work so many times that you start seeing the patterns underneath it. Strategy without operational intuition is guessing with better vocabulary. But it sure looks good on a slide.

The pipeline is already thinning

This isn’t theoretical. The effects are starting to show now.

Agencies are restructuring around AI-assisted workflows that need fewer junior staff. In-house teams are automating the entry-level tasks that used to justify hiring graduates. And LinkedIn is doing what LinkedIn does best: seniors who built their entire careers on grunt work are now posting advice telling juniors they don’t need to do any. “Learn prompt engineering.” “Focus on strategic thinking.” “The future belongs to people who can work with AI.” Easy to say when your own expertise was built on exactly the manual labour you’re telling the next generation to skip. The hypocrisy is breathtaking, but at least it gets engagement.

The juniors who do get hired are being handed AI outputs to review rather than producing the analysis themselves. They’re learning to evaluate summaries instead of learning to build them. This sounds like a more efficient use of their time until you realise that evaluating an AI audit requires the same operational intuition that doing the audit manually would have built. They’re being asked to quality-check work they don’t yet have the experience to meaningfully assess.

This week, I saw someone on X post a screenshot of an AI dashboard with the caption: “The moment you realize llms.txt is more important than sitemap.xml, you win.”

Let that sink in. A sitemap.xml is the file behind the Sitemaps protocol, a long-established standard that the major search engines support for listing the URLs you want crawled. An llms.txt is an unproven proposal with no established convention, no adoption standard, and no evidence that any major language model treats it as a retrieval signal. Declaring it “more important” than sitemap.xml isn’t a hot take. It’s a tell, not to say brain rot. It tells you the person’s understanding of search was built entirely on whatever their dashboard showed them that morning. When you delegate your thinking to a dashboard, you don’t win. You lose. You just don’t know it yet because the dashboard hasn’t told you.

The competence cliff

Project forward three to five years. Most of the current cohort of seniors, the people who built their pattern recognition through years of manual work, will still be in the market. Some will have moved into leadership. Some will have left the industry. Natural attrition.

Behind them, there should be a cohort of mid-levels who’ve spent the last few years building their own operational experience, preparing to step into senior roles. But that cohort is thinner than it should be, because the work that would have developed them has been automated or eliminated. They have tool proficiency. They have framework knowledge. They have, in many cases, genuinely good strategic instincts. What they lack is the accumulated reps — the dozens of migrations, the hundreds of audits, the thousands of individual problems encountered and resolved — that turn good instincts into reliable judgment.

When organisations try to hire senior practitioners to replace the ones who’ve left, they’ll find the pool is smaller than expected. Not just because people left the industry, but because fewer people were developed into seniors in the first place. The pipeline wasn’t cut off. It was narrowed, quietly, one automated task at a time.

And the organisations that narrowed it will be the same ones wondering why they can’t find experienced hires. They’ll blame the “talent shortage.” They always do. It’s never “we destroyed the pipeline.” It’s always “the market is tough.”

“But AI makes everyone more productive”

The strongest counterargument goes like this: AI doesn’t eliminate learning, it accelerates it. Juniors can use AI to handle the mechanical parts and spend more time on the analytical parts. They’ll learn faster, not slower, because they’re not wasting time on repetitive tasks.

This is the argument people make when they’ve already decided to cut headcount and need a narrative that makes it sound like a favour to the people they didn’t fire. I find it unconvincing for a specific reason: it assumes you can separate the mechanical from the analytical, and that the mechanical part has no educational value. In practice, the boundary between “tedious task” and “learning experience” is invisible at the time. You don’t know which crawl review will be the one where you notice the pattern that shapes how you think about crawl efficiency for the next decade. You can’t predict which manual audit will be the one where something unexpected teaches you how a specific CMS handles canonical tags. The insight emerges from the repetition. Remove the repetition, and you remove the conditions for the insight to occur.

There’s also a selection bias that nobody in the “AI accelerates learning” camp wants to acknowledge. The people saying “AI would have made me learn faster” are people who already learned the hard way. They’re projecting backwards from a position of existing expertise, imagining how they’d use AI with the judgment they already have. A junior doesn’t have that judgment yet. That’s the whole problem. It’s like a chef saying a food processor would have sped up their training. Maybe. But you still need to learn what properly diced onions look like before the machine is useful. You can’t skip to “efficient” without going through “competent” first.

Can AI be a useful learning tool? Sure. In the same way that having a knowledgeable colleague explain something is useful. But it’s a supplement to doing the work, not a replacement for it. And the current industry trend isn’t “use AI to learn faster.” It’s “use AI to do the work instead of hiring someone to learn by doing it.” Those are very different things, and the people conflating them usually have a tool to sell or a headcount reduction to justify. Often both.

What this means for practitioners

If you’re early in your career: do the work manually, even when you don’t have to. Run the crawl yourself before asking the AI to summarise it. Build the spreadsheet before using the automated report. The tool will always be there when you need efficiency. The operational understanding you build by doing it the slow way compounds over your entire career. Ignore the LinkedIn seniors telling you to skip the part they didn’t skip. They got theirs. They’re not thinking about whether you’ll get yours.

If you’re mid-career: you’re in the last generation that got the full apprenticeship. That’s an asset, and it’s becoming a rare one. The pattern recognition you built isn’t replicable by tools, and it’s going to become scarcer. Your value isn’t in executing tasks faster. AI will always beat you there. Your value is in knowing which tasks matter, which outputs to distrust, and what the tool can’t see. Protect that. It’s worth more than whatever certification is trending this quarter.

What this means for the people making decisions

If you’re running a team or an agency: every junior role you eliminate or automate is a senior hire you won’t be able to make in five years. The savings are real and immediate. The cost is deferred and invisible — until it isn’t. Which, conveniently, means you’ll probably have moved on to a different role before anyone connects the cause to the effect. Very tidy.

The question isn’t whether AI can do the work juniors used to do. It can. The question is whether you have a plan for developing the judgment that doing that work used to create. If the answer is “they’ll figure it out” or “we’ll hire experienced people,” you’re banking on a pipeline that you’re actively draining. “We’ll hire experienced people” only works when someone, somewhere, is still developing them. If every organisation adopts the same strategy of automating junior work and hiring senior talent, the maths stops working. You can’t all fish from the same shrinking pond and act surprised when it’s empty.
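Here is that maths as a toy model. Every number in it is invented for illustration (a pool of 1,000 seniors, 8% leaving each year, five years to develop a senior, 40% of juniors getting there, intake cut from 200 to 80), and the function name and parameters are mine. It is a sketch of the shape of the problem, not a forecast.

```python
def project_seniors(years, seniors, attrition, old_intake, new_intake,
                    years_to_senior, completion):
    """Project the industry-wide senior headcount, year by year.

    Junior hiring drops from `old_intake` to `new_intake` in year 1.
    Each year a fixed share of seniors leaves, and the junior cohort hired
    `years_to_senior` years earlier arrives, scaled by the share who made it.
    """
    pool = []
    for year in range(1, years + 1):
        hired_in = year - years_to_senior
        # Cohorts hired before the cut were hired at the old rate.
        cohort = old_intake if hired_in <= 0 else new_intake
        seniors = seniors * (1 - attrition) + cohort * completion
        pool.append(seniors)
    return pool


# All figures below are hypothetical.
projection = project_seniors(
    years=10, seniors=1000, attrition=0.08,
    old_intake=200, new_intake=80,
    years_to_senior=5, completion=0.4,
)
for year, count in enumerate(projection, start=1):
    print(f"year {year:2d}: {count:.0f} seniors")
```

With these made-up inputs the pool holds steady for the first five years, because the cohorts hired before the cut are still arriving. The decline only starts in year six, and from then on it compounds. That delay is the whole problem: the savings show up immediately and the shortage shows up on somebody else's watch.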

Nobody’s going to notice the cliff until they’re standing at the edge. By then, the people who would have been your senior hires will be mid-levels with five years of tool proficiency and two years’ worth of actual reps.

Seniority isn’t a title you earn by surviving long enough. It’s a capability you build by doing the work. Repeatedly, imperfectly, and in enough different contexts to recognise the next problem before it fully arrives. There’s no shortcut. There never was. And the industry is about to learn that the hard way.