AI Saved You Time. Your Boss Noticed.

How efficiency gains became workload increases, and nobody adjusted the headcount.

Researchers at UC Berkeley spent eight months following 200 employees at a US technology company. Not a two-week pilot. Not a survey where everyone ticks “strongly agree” on “AI has improved my productivity” because their manager is cc’d. Actual ethnographic observation: watching people work, monitoring internal comms, conducting over 40 interviews. The study ran from April to December 2025, and the findings, published in Harvard Business Review earlier this month, confirmed what anyone paying attention already knew.

AI didn’t reduce work. It intensified it.

Workers didn’t actually benefit from saved time. They absorbed more tasks, juggled more threads, expanded their working hours — voluntarily. Nobody asked them to. The tools made it possible, so they did it. Then the cycle kicked in: AI accelerated tasks, which raised speed expectations, which increased AI reliance, which widened scope, which expanded the overall density of work. One participant nailed it: you thought maybe you’d work less, but you don’t work less.

If you work in SEO, none of this should surprise you. You’ve been living it. You just haven’t had a peer-reviewed paper to wave at your manager when they ask why the AI tools haven’t freed up your calendar.

The stack only grows

The Berkeley researchers found that when AI fills knowledge gaps, workers absorb responsibilities that previously belonged to other people. Product managers started writing code. Researchers took on engineering tasks. Nobody redesigned the org chart. People just started doing things they technically could now do, and the organisation quietly treated that as the new normal.

Sound familiar?

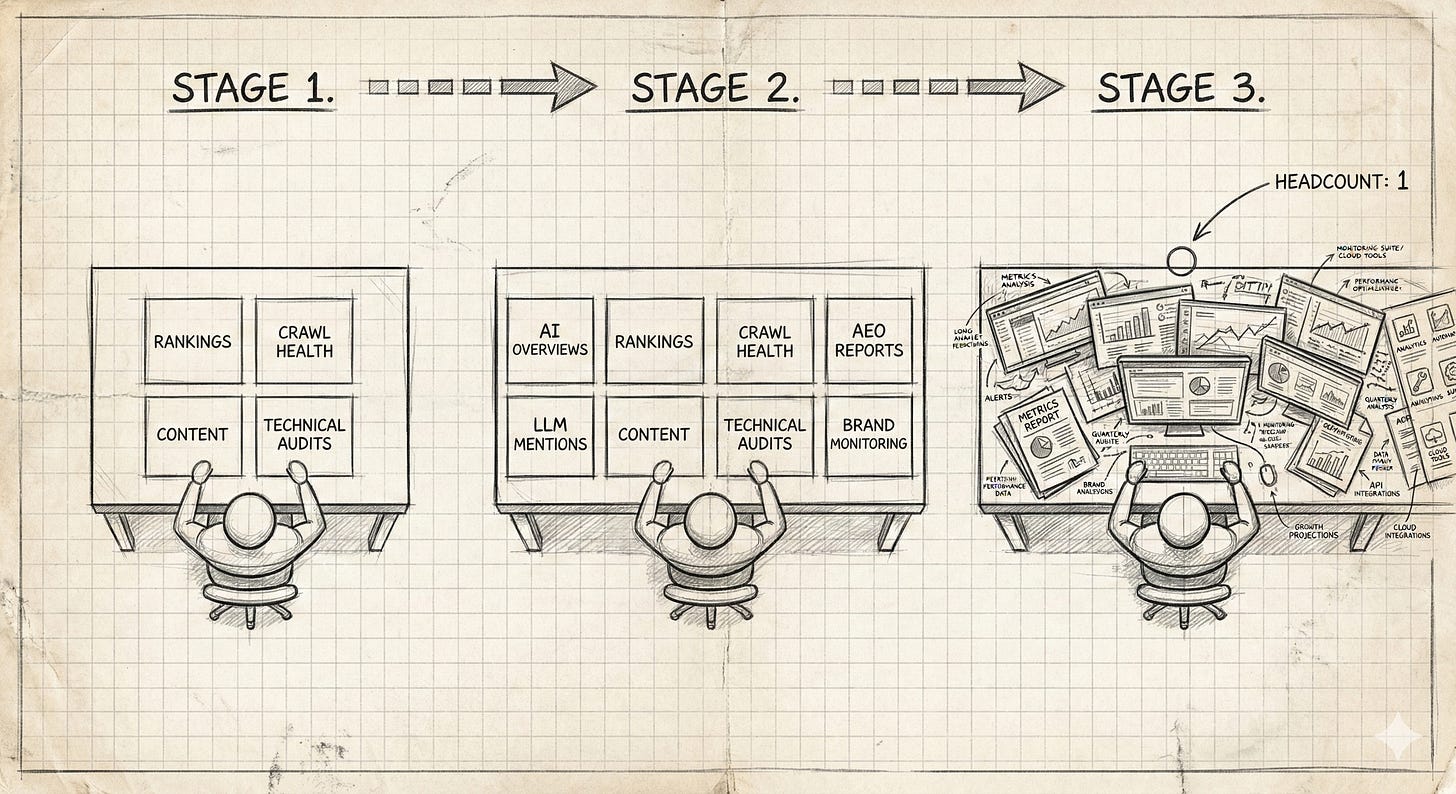

Two years ago, your job was organic search. Rankings, crawl health, content recommendations, technical audits. Large scope, but defined. Then AI Overviews showed up. Then ChatGPT started eating informational queries. Then Perplexity appeared. Then “AEO” became a conference track, because some folks perched on the Dunning-Kruger peak of the SEO industry have never met an acronym they didn’t want to exploit. Then someone at the top read a Gartner forecast about a 25% decline in traditional search and forwarded it to your Slack channel with “thoughts?”

Nobody removed anything from your plate. They just added surfaces. You’re now expected to monitor organic rankings, AI Overview inclusion, LLM brand mentions, and whatever retrieval surface launched this quarter, with the same headcount and the same budget, while reporting to someone who genuinely believes AI made your job easier because they saw a viral LinkedIn post about it.

The Berkeley study calls this “task augmentation.” I’d call it scope creep wearing some lipstick.

Fifty articles and a QA problem

The content side is where this gets ugly.

A team that used to write 10 articles a month can now “produce” 50 because AI drafts are fast. Brilliant. The writing bottleneck vanished. Pop the champagne! Except someone still has to review those 50 pieces. Fact-check them. Make sure they don’t hallucinate product claims or cannibalise existing pages. Ensure they’re not just five variations of the same article with different meta titles. Check that the brand voice survived whatever the machine decided the brand voice was.

The person who used to be a content strategist is now a full-time QA reviewer. The person who used to write is now editing machine output: a fundamentally different skill that nobody trained them for, and that nobody wants to do. The creative part of the job? The part that made people choose this career? Well, it got replaced by an endless review queue. Congratulations on your efficiency gains.

And the output expectations ratcheted up permanently. Once leadership saw “50 articles a month” on a dashboard, there was no going back to 10. The machine made it possible, so now it’s the baseline. Try telling your VP of marketing that 10 well-researched pieces would outperform 50 AI-drafted ones. I’ll wait.

The Berkeley researchers documented exactly this pattern: AI created a new rhythm. The feeling of momentum was real. So was the cognitive fatigue, the burnout, and the weakened decision-making that followed. But those don’t show up on the dashboard, so they don’t exist.

The reporting tax

The intensification isn’t just in doing the work. It’s in performing the work.

When your CEO reads about AI search — which they will, because the vendor ecosystem makes sure of it — and asks “what’s our AI visibility strategy?”, someone has to produce an answer. That answer requires new tools, new metrics, new reports, new decks. None of which replaced the existing reporting stack. It all piled on top.

You’re still tracking keyword rankings. Still pulling organic traffic data. Still producing the monthly performance report that three people skim and nobody acts on. But now there’s also a “brand mentions in LLMs” slide, an “AI Overview inclusion rate” tracker, and someone’s asked about “share of voice in Perplexity” — a metric that means approximately nothing but sounds boardroom-ready. Each metric comes from a different tool, measured differently, with caveats nobody reads and confidence intervals nobody asks about.

The Berkeley study found that workers described “a sense of always juggling, even as the work felt productive.” In SEO, the juggling isn’t just tasks; it’s entire measurement frameworks running in parallel, none of which are compatible, most of which are telling you different things, and all of which need a slide by Thursday.

“Vibe working” is a tell

The timing of this research landing alongside Anthropic’s launch of Claude Opus 4.6 is almost too neat. Anthropic’s head of product for enterprise told CNBC they’re entering a “vibe working” era — extending the “vibe coding” concept to all professional work. Describe what you need at a high level, the machine delivers production-ready outputs, everyone goes home happy. Or at least goes home.

I want to take that phrase seriously for a moment, because it reveals more than intended.

“Vibe coding” became popular because it described a real phenomenon: people generating functional code by describing what they wanted, without fully understanding the implementation. Useful shorthand. Also an admission that the person using the tool couldn’t always verify what the tool produced. The “vibe” was the part that replaced comprehension.

Extending this to “vibe working” means extending that trade-off to SEO analysis, research, project management, content strategy. You describe the vibe of what you want, the machine produces something that looks right, and you ship it. The less you understand the domain, the more you depend on the vibe. The more you depend on the vibe, the less you notice when the output is wrong.

For SEO, this is the Competence Cliff I wrote about previously, wearing product marketing lipstick. When the vibe replaces the understanding, and the review queue replaces the craft, you end up with people who can produce more but verify less. The Berkeley research tells us those same people will burn out faster while doing it. Efficient all around, really.

The competitive ratchet

There’s a mechanism in the Berkeley findings that deserves its own section because it maps onto the SEO industry like it was written about us.

When your competitor uses AI to publish 200 pages of “optimised” content a month, standing still feels like falling behind. When the agency down the road starts offering “AI visibility audits” as a service line, not offering one feels like a gap in your deck. Nobody formally raises expectations, but informal norms shift fast. Within months, what AI makes possible becomes what’s expected. What’s expected becomes what’s sold. What’s sold becomes what’s measured. And suddenly everyone’s busy with work that didn’t exist eighteen months ago and nobody’s stopped to ask whether it’s producing value or just activity.

The endgame of this logic is someone on X proudly announcing they just bought a full “Programmatic SEO” engine for $9. Nine dollars for a system that generates pages at scale. My response: congratulations, you just bought a spam penalty for $9. But that’s where the ratchet leads: when output is cheap enough, people stop asking whether the output is worth producing. They just produce it, because not producing it feels like losing.

The SEO industry has been here before. The content farm era. The link-building volume game. Each time, the tools made it possible to scale output, the industry treated the new scale as the new standard, and the whole thing continued until Google corrected the market with an algorithm update and everyone pretended they’d known all along it was unsustainable. Same cycle. Different vocabulary. The AI tools make it possible to produce more content, monitor more surfaces, generate more reports, and track more metrics. So now everyone does. And nobody’s asking whether the additional output produces additional value, because the dashboards look fuller and the activity metrics are up and the LinkedIn posts about “10x productivity” are getting engagement.

The Berkeley researchers call the eventual result “unsustainable intensity.” SEO practitioners call it Tuesday.

What the efficiency story skips

The industry conversation about AI and SEO is stuck between two poles. Vendors selling efficiency. Practitioners worrying about replacement. Both miss what’s actually happening on the ground.

AI isn’t making SEO teams more efficient. It’s making them do more things. The per-task time dropped, but the task count exploded, and the cognitive load of managing, reviewing, and reporting on all of it is higher than before. A 2025 industry survey found that over 40% of SEO professionals said creating original content was their most time-consuming task. The industry’s proposed solution? AI content tools. More content to manage, more pages to audit, more cannibalisation risks to monitor. The time-per-article dropped. The total time spent on content went up. Nobody put that in the case study.
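To make that arithmetic concrete, here’s a back-of-envelope sketch in Python. Every number in it is made up for illustration (hours to draft, hours to review); none of them come from the Berkeley study or the survey above. The only point is the shape of the maths: total time is article count multiplied by time per article, so a big drop in per-article time can still lose to a bigger jump in volume.

```python
# Back-of-envelope sketch. All hours below are HYPOTHETICAL, chosen for
# illustration only; they are not figures from the Berkeley study or any survey.

def monthly_content_hours(articles: int, draft_hours: float, review_hours: float) -> float:
    """Total monthly hours = number of articles x (drafting + review hours per article)."""
    return articles * (draft_hours + review_hours)

# Before: 10 hand-written articles (assumed 6h to write and 2h to edit each).
before = monthly_content_hours(articles=10, draft_hours=6.0, review_hours=2.0)

# After: 50 AI-drafted articles (assumed 0.5h of prompting and 2.5h of
# fact-checking, de-duplicating and fixing brand voice each).
after = monthly_content_hours(articles=50, draft_hours=0.5, review_hours=2.5)

print(f"Hours per article: {6.0 + 2.0} -> {0.5 + 2.5}")  # Hours per article: 8.0 -> 3.0
print(f"Monthly content hours: {before} -> {after}")     # Monthly content hours: 80.0 -> 150.0
```

Plug in your own team’s numbers. As long as review time per AI draft stays anywhere near what a human draft used to need, total hours climb roughly in line with volume, even while the per-article figure on the dashboard looks like a win.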

My read: the organisations getting this right are the ones treating AI as a way to do the same work better, not more work faster. That means being deliberate about what you automate and what you don’t. It means resisting the gravitational pull of scaling output just because scaling is now cheap. And it means saying (out loud, in a meeting, to people who don’t want to hear it) that the new tools created new work, not free time.

Don’t publish 50 AI-drafted articles just because you can. Don’t add a sixth monitoring dashboard without retiring the third. Don’t let “what’s our AI strategy?” become a quarterly exercise in reporting theatre that generates decks but changes nothing.

The time you saved was real. It was never yours to keep. Your boss noticed, the scope expanded, and now you’re doing your original job plus the one the machine created. The tool got faster. You got busier. The vendors made up new vanity metrics they pinky-swear are valuable. And somewhere in a pitch deck, that’s being called “productivity.”