You’re Not Scaling Content. You’re Scaling Disappointment.

The tools keep changing. The wall they crash into doesn’t.

Every few years, the SEO industry discovers a new way to mass-produce content and convinces itself that this time it’ll work. That the sheer volume of pages will overwhelm Google’s ability to assess quality. That if you just publish enough, the numbers will carry you.

It never works. It has never worked. And the people selling you these approaches know it has never worked. They just need it to work long enough to collect the invoice.

The pattern has a name. It’s called “not learning.”

Let’s walk through the timeline, because apparently we need to do this again.

2008–2011: Content spinning. The pitch was simple: take one article, run it through software that swaps synonyms, and suddenly you have fifty “unique” articles. The word “unique” was doing a lot of heavy lifting in that sentence. These articles read like someone had fed a dictionary through a blender. But even if the output had been polished, the premise was broken. Here’s what the content spinners never grasped, and what their successors still don’t: uniqueness is trivially easy to produce. A monkey dropping its hands on a keyboard produces unique content. The string of characters has never existed before—congratulations, it’s original. The hard part was never uniqueness. It was producing uniqueness that’s worth something. Unique and valuable are not synonyms, and the gap between them is where every scaling strategy falls apart.

Google tolerated it for a while. Their systems simply hadn’t caught up yet. Then Panda arrived in February 2011, hit nearly 12% of all search queries, and content farms watched their traffic evaporate overnight. I was “fortunate” enough to watch it happen in real time. Demand Media, the poster child of the content-farm model, reported a $6.4 million loss the following year.

The lesson was supposed to be clear: you cannot industrialise quality. Volume without substance is a liability with a longer tail than most budgets can absorb.

2015–2022: Programmatic SEO. The pitch evolved. Instead of spinning existing articles, you’d build templates and fill them with structured data. “Best [X] in [City]” pages, generated by the thousand, each one a thin wrapper around a database query. Some of these actually provided value—if the underlying data was good and the template served genuine user needs. Most didn’t. Most were just doorway pages wearing a better outfit. Google spent years refining its ability to detect and demote templated content that existed primarily for indexing purposes rather than for humans.

The lesson was supposed to be reinforced: scale works when there’s substance underneath. Without it, you’re just building a bigger target.

2023–present: AI-generated content at scale. And here we are again. Same pitch, shinier tools. “We can produce 500 articles a month!” Wonderful. Can you produce 500 articles a month that are worth reading? That contain something a reader couldn’t get from the results already in the index? That demonstrate any form of expertise, experience, or original thought?

No? Then you’re not scaling content. You’re scaling your crawl budget waste.

And the pattern-recognition failures are stunning. (This wasn’t subtle. Several of us noticed. No, we weren’t impressed.)

I recently came across an AI visibility tool, one that sells itself on helping you get discovered by AI systems, that had generated hundreds of pages following the pattern “best SEO agencies in {city}.” Déjà vu. Anyone who lived through programmatic SEO recognises this immediately: it’s the 2017 playbook, except now the copy is written by an LLM. The template got a grammar upgrade and an “it’s AEO” stamp. The strategy didn’t.

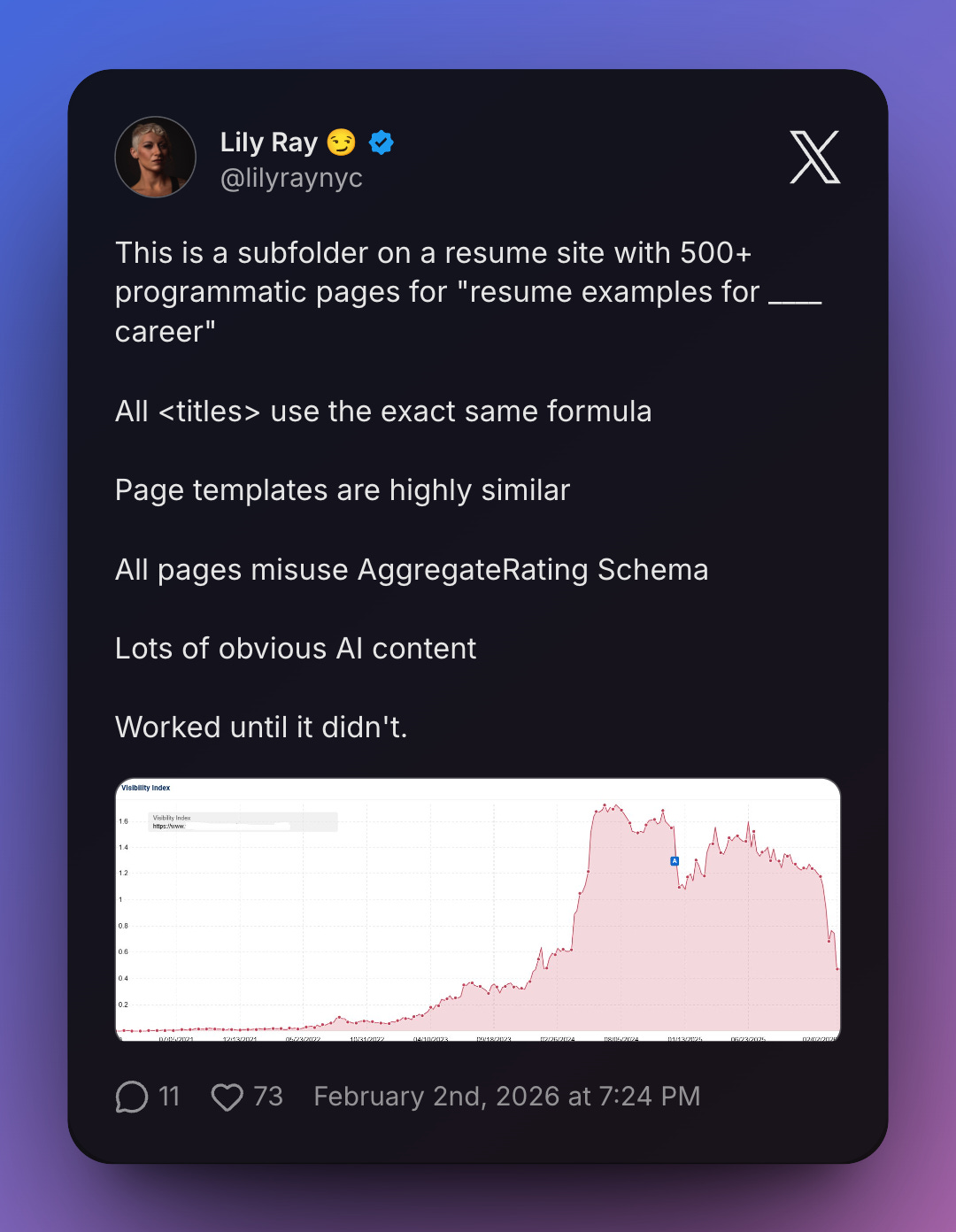

Lily Ray flagged a similar case: a resume site with 500+ programmatic pages for “resume examples for {career}.” Every title following the exact same formula. Near-identical page templates. Misused AggregateRating schema. Obvious AI content throughout. Her summary was three words: “Worked until it didn’t.”

That phrase should be tattooed on every content-scaling pitch deck. Worked until it didn’t. It always works. And then it doesn’t.

The irony of an AI optimisation tool using mass-generated doorway pages to build its own visibility would be funny if it weren’t so perfectly on-brand for this industry.

The qualitative wall doesn’t move

Here’s what every generation of content scalers fails to understand: Google doesn’t evaluate content in isolation. It evaluates content relative to everything else in the index on the same topic.

Publishing 500 AI-generated articles about mortgage rates doesn’t make you an authority on mortgage rates. It makes you the 500th source saying the same thing in slightly different words. And Google already has 499 of those. It doesn’t need yours.

The qualitative wall is this: there is a minimum threshold of genuine value—original insight, lived experience, specific expertise, something the reader cannot get elsewhere—below which no amount of volume helps you. You can publish a million pages below that threshold. You’ll rank for nothing that matters.

And it gets worse. For the people scaling AI content specifically to gain visibility in AI-powered answer systems, the volume strategy doesn’t just fail, it actively backfires. A 2025 paper on retrieval evaluation for LLM-era systems introduces a metric that measures both helpful and distracting passages in retrieval. The finding that matters here: low-utility content doesn’t sit quietly in the index waiting to be ignored. It can pull retrieval models off-track, degrading the quality of answers those systems produce. Your 500 thin articles aren’t just invisible. They’re noise. And if your site also has genuinely useful pages buried in that noise, congratulations—you’ve built your own interference pattern. The volume you thought would help discovery is actively drowning the pages that might have earned it.

This isn’t a new insight. It’s the same insight that content spinners ignored in 2010, that programmatic SEO factories ignored in 2018, and that AI content mills are ignoring right now. The tools got better at producing text. The text still has nothing to say.

Google told you. Repeatedly.

Google’s spam policies define scaled content abuse as generating many pages “for the primary purpose of manipulating search rankings and not helping users.” They explicitly list “using generative AI tools or other similar tools to generate many pages without adding value for users” as an example. This is not subtext. It’s text.

In June 2025, Google began issuing manual actions specifically for scaled content abuse, targeting sites that had been mass-publishing AI-generated content. Sites across the UK, US, and EU received Search Console notifications citing “aggressive spam techniques, such as large-scale content abuse.” Complete visibility drops. Pages didn’t slide down the rankings; they vanished.

The August 2025 spam update continued the enforcement. Subsequent core updates have kept tightening the screws. Each time, the same profile gets hit: high volume, low substance, no editorial oversight.

And each time, the affected site owners acted surprised. As if Google hadn’t been telling them this for fifteen fucking years.

“But our content is ranking well”

This is my favourite delusion. I’ve seen it at every stage of this cycle. “Our AI content is ranking, so it must be fine.” Low-value content that ranks well is often precisely what prompts Google to ship algorithmic improvements and issue manual actions. If your low-value content is ranking, the system hasn’t gotten to you yet. That’s all it means.

Google aggregates signals at the site level, not just the page level. You can have individual pages performing while the overall quality signal of your site degrades. And when the enforcement catches up (algorithmically or manually), it doesn’t pick off pages one by one. It hits the lot.

This is the content spinner’s fallacy, recycled: “It’s working right now, so it must be a strategy.” Demand Media’s content was ranking too. Right up until it wasn’t.

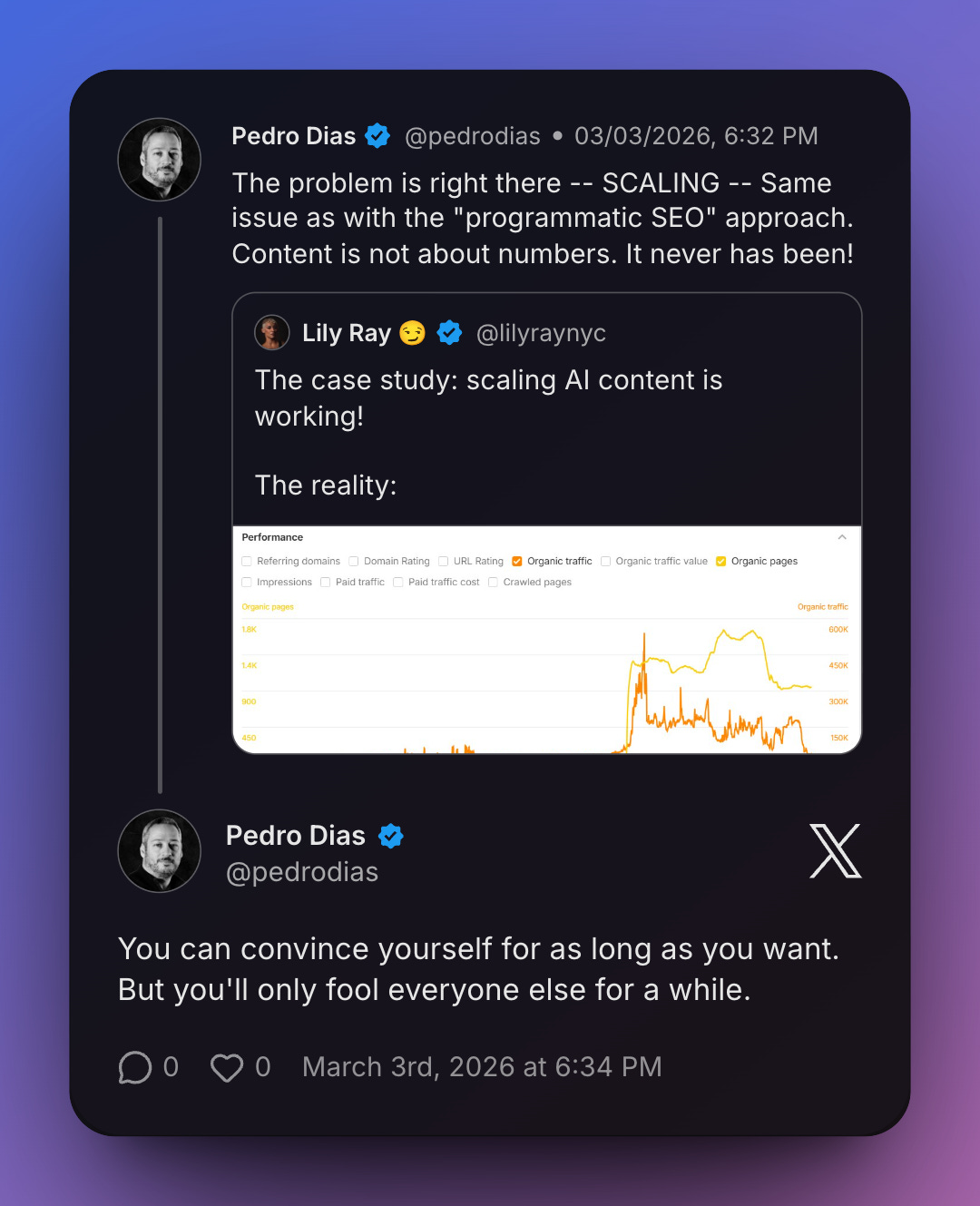

Lily captured this perfectly: “The case study: scaling AI content is working! The reality:”, followed by the traffic cliff that inevitably arrives. Every scaling success story is a snapshot taken before the correction. Nobody publishes the sequel.

The economics don’t even make sense

Set aside the risk for a moment. Let’s talk about what you’re actually producing.

Five hundred AI-generated articles a month. Each one needs to be reviewed for accuracy, because LLMs hallucinate, and publishing incorrect information is a liability that extends well beyond SEO. Each one needs to be checked for originality, because if it reads like everything else in the index, it provides no added value and no competitive advantage. Each one needs editorial oversight to ensure it actually serves the audience you claim to serve.

If you’re doing all of that, the cost didn’t disappear. It moved from writing to reviewing, and possibly increased, while you convinced yourself you were being efficient. The “efficiency” of AI content generation evaporates the moment you apply the quality standards the content actually needs to meet.

And if you’re not doing any of that? You’re publishing unreviewed, unoriginal, potentially inaccurate content at scale under your brand name. I genuinely do not understand how anyone signs off on that.
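To put rough numbers on the review burden described above, here is a back-of-the-envelope sketch. Everything in it is an assumption made for illustration: the per-check minutes, the hourly rate, and the function name `monthly_review_cost` are hypothetical and not taken from any real budget.

```python
def monthly_review_cost(articles, minutes_per_check, hourly_rate):
    """Return (editor_hours, cost) for reviewing every article once per check."""
    total_minutes = articles * sum(minutes_per_check.values())
    hours = total_minutes / 60
    return hours, hours * hourly_rate


# Hypothetical inputs: minutes spent per article on each of the three checks.
checks = {"accuracy": 30, "originality": 15, "editorial": 15}

hours, cost = monthly_review_cost(500, checks, hourly_rate=50.0)
print(f"{hours:.0f} editor-hours, {cost:,.0f} per month")
```

With these made-up inputs, one hour of review per article becomes 500 editor-hours a month, which is about three full-time roles at 160 hours each. Change the assumptions however you like; the work still has to be done by someone.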

Same mistake, better tools

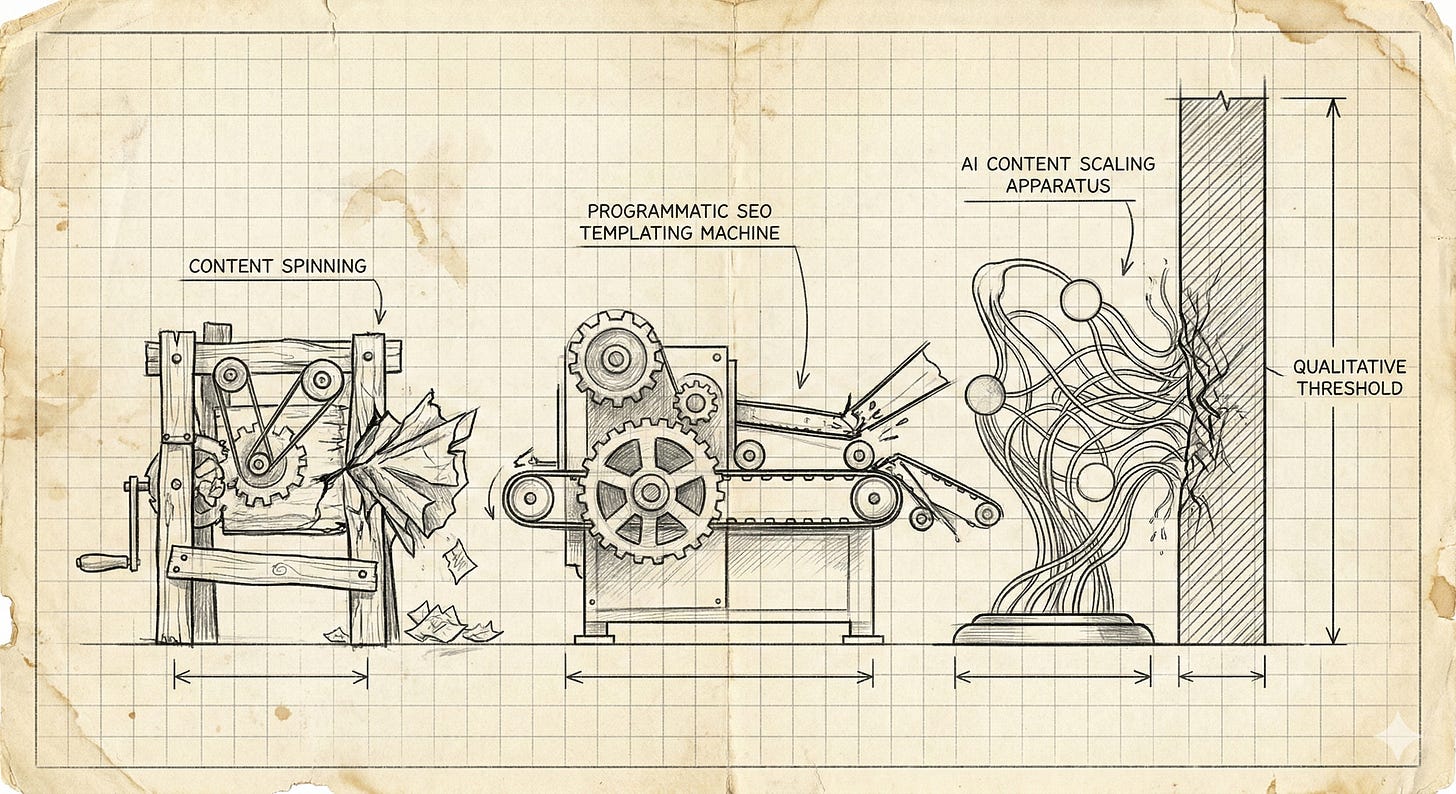

Content spinning. Programmatic SEO. AI-generated content at scale. Three different tools, one identical mistake: treating content as a manufacturing problem.

Manufacturing produces identical outputs at scale—that’s the point. Content derives its value from the opposite: from being specific, from being informed by experience, from saying something the rest of the index doesn’t. Every attempt to industrialise it crashes into that contradiction.

You can’t automate specificity. You can’t template experience. You can’t generate original thought by running a prompt through an LLM and hoping something useful comes out. And these constraints won’t be solved by the next model release. They’re baked into what makes content worth reading in the first place.

The people who keep chasing scale are optimising for the wrong variable. They see “more content” as an input that produces “more traffic” as an output. But the function is not linear. It never was. It’s gated by quality, and no amount of volume bypasses the gate.
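A toy model makes the gate concrete. This is a sketch under loud assumptions, not a description of how any search engine scores pages: the 0-1 quality score, the `QUALITY_GATE` threshold, and the per-page demand figures are all hypothetical.

```python
QUALITY_GATE = 0.6  # hypothetical threshold on a 0-1 quality score


def expected_traffic(pages, gate=QUALITY_GATE):
    """Sum demand for pages at or above the gate; pages below it contribute nothing."""
    return sum(demand for quality, demand in pages if quality >= gate)


# Each page is (quality, monthly demand). All values are illustrative.
thin = [(0.2, 100)] * 500    # 500 thin pages
strong = [(0.8, 100)] * 5    # 5 substantive pages

print(expected_traffic(thin))           # volume alone
print(expected_traffic(strong))         # a handful of pages above the gate
print(expected_traffic(thin + strong))  # adding the thin pages changes nothing
```

If anything, the model is generous: it treats thin pages as harmless, while site-level quality signals mean they can also drag down the pages that clear the gate.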

The only question that matters

Before you publish anything (AI-assisted or otherwise), ask one question: what does this page offer that the reader cannot already get elsewhere?

If the answer is “nothing, but we’ll have more pages indexed,” you’re not building a content strategy. You’re building a liability. And you’re doing it with the confidence of someone who has apparently never heard of Panda, never looked at what happened to programmatic SEO sites in 2022, and never read Google’s own spam policies.

You can convince yourself for as long as you want. But you’ll only fool everyone else for a while.

The wall is still there. It’s always been there. The tools keep changing. The wall doesn’t.